Who are your agents working for?

Convenience got us here. It might also get us out.

Like most of us, I’ve traded my privacy for convenience many times over the past few decades. But I’m starting to wonder if handing my life over to agents will finally force me to reconsider.

A lot of us are setting up our own OpenClaw, Hermes, Pi, or Cowork agents. As we customize and personalize these agents, we’re making privacy-related decisions ranging from completely air-gapped Mac Minis to full-blown YOLO. A friend of mine recently set up a machine that has virtually no real information about her within reach of the device. Another resigned himself to the fact that his agents will become his virtual twins and gave them access to all his personal accounts, credit cards, etc.

I’m not sure where I stand, or where I’ll end up. But it’s clear to me that we’re headed towards a world that looks very different from the one of a decade ago, when my social security number was leaked and subsequently used to sign up for a dozen different Verizon Wireless accounts. At the time, I couldn’t imagine a worse outcome of a security breach.

But now, I’m intentionally installing what is effectively spyware on my computers. I’m having my agents handle increasingly personal tasks for me. Buying things. Messaging friends and family members. Helping me with important career decisions. I’m handing them more of my private information and more of my agency than the me from the Verizon incident would ever have dreamed possible.

As a VC, I’m naturally wondering what this means for the future of personal and enterprise computing and where the opportunities lie. So are my partners at USV. We’re talking about it a lot.

The obvious answer is that Anthropic and OpenAI will build more powerful harnesses that will enable them to have even better models, which will unlock even better harnesses, and so on. We, as normal convenience-happy customers, will hand over more and more of our personal data. A few months ago, Ben Thompson made a strong case for this outcome (specifically that the labs that control both the model and the harness will win) and I believe there’s a good chance he will be right. But might the complete opposite thing happen?

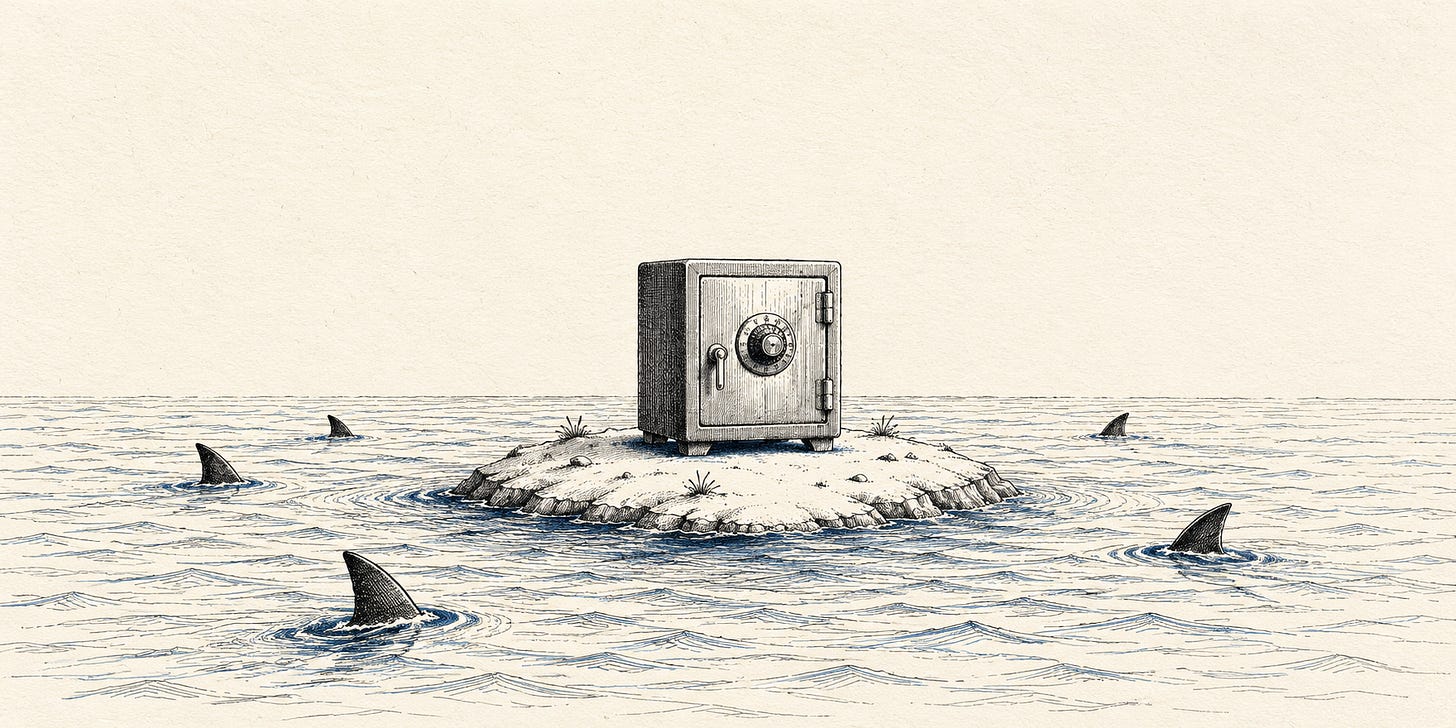

The contrarian bet would go something like this: people will eventually understand that their most sensitive context is sitting in someone else’s data center, training someone else’s next model, subject to someone else’s terms of service, and maybe used to do real things we never asked to be done. The same way people eventually demanded control over their health data and their financial data, they may eventually (finally?) demand control over their digital footprint.

The more power people concede to their agents, the more they’ll want to know they can trust them. But it won’t just be about trust or security. It will also, just like before, be about convenience. People didn’t demand health data portability solely because they cared about data rights in the abstract. They demanded it because they switched doctors and their records didn’t follow them and it was a pain in the ass. There may be a version of that moment coming for agents.

When that happens, the value won’t accrue to the lab. Instead, it will accrue to whoever provides the user-controlled memory substrate that is both trusted and portable. One of my partners at USV believes that this is where the world is headed, and I’m increasingly coming around to that future, too.

But I’m not even sure I want that future. After all, convenience always wins. And the easier path is to keep handing things over and assume the incentives eventually align. But I’m spending enough time with agents now that I’m starting to wonder. At some point, I’d like to know who they’re really working for.