Moats: Memory vs. Customization

Notes on memory, harnesses, and where lock-in really lives.

Like many others, we’re spending a lot of time at USV talking about where moats will accumulate for AI companies. And while we don’t all agree yet, it’s been a helpful exercise, as well as a fun one (because as a byproduct, we all experiment a lot with various models, agent platforms, harnesses, etc.).

For a while, it was very en vogue to say things like “memory is the moat” in X posts, on podcasts, etc. That never really resonated with me, personally. Any memory that a model has about you can be ported easily, because memories are just facts. If you get a model to spit out the facts, you can take those facts and put them into another model. A great example came a few months ago, when the Claude app overtook ChatGPT in the App Store. To make it easier for users to switch over, Anthropic released a prompt that got ChatGPT to spit out everything it knew about you. I tried it out and it worked perfectly.

But maybe something similar to memory is sticky. Not memory of facts but memory of customizations, workflows, tools, skills, etc.

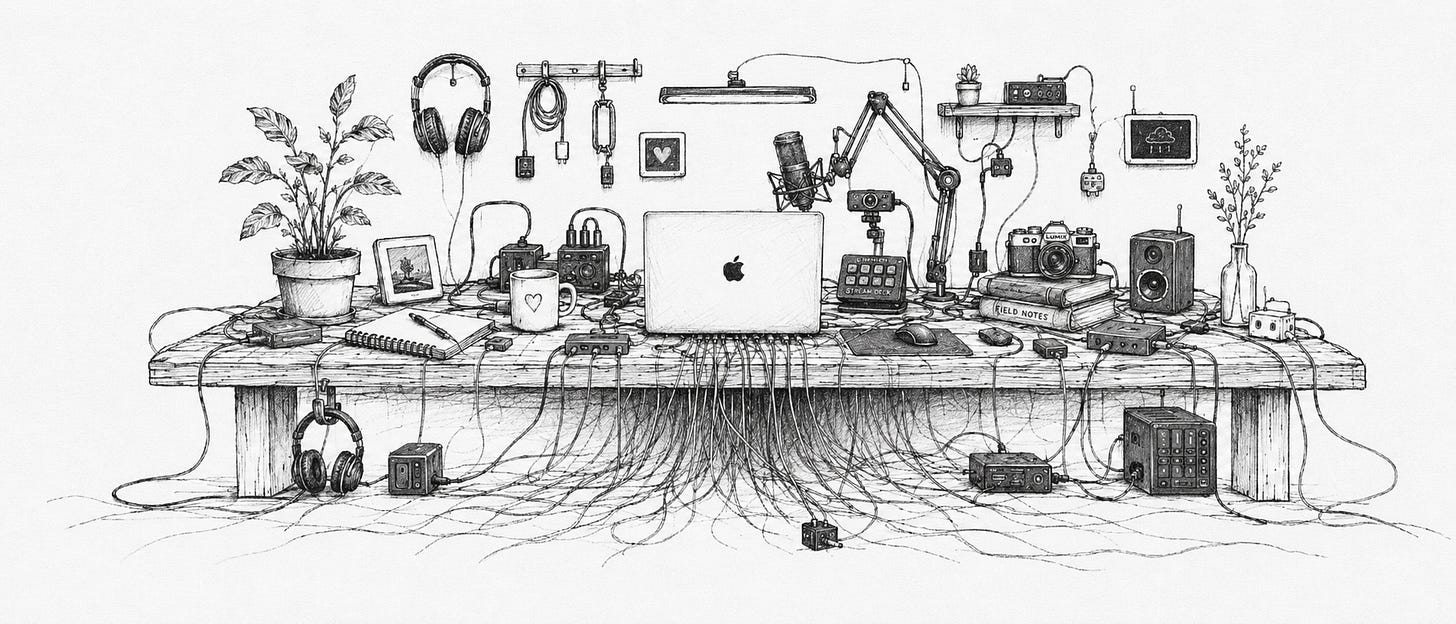

The more I customize my Claude desktop app, the stickier it becomes. A small part of that may be memories about me. But the bigger part is the way I’ve customized my setup: my nutritionist project, my doctor project, all of the various skills I’ve either written or imported, and the workflows I’ve chained together that Claude can go and execute once I ask a question. This is arguably even more powerful on agent hosting platforms such as Tasklet, where the volume of customizations is much greater because they do so much more for you than the Claude desktop app. Tasklet can just go call a tool or host your mini app on a server somewhere easily, and all of those bells and whistles entrench you even further.

And yet OpenClaw, Hermes, and similar harnesses take customization (and therefore, potentially, lock-in) to a whole new level. There are practically endless permutations for setting up your OpenClaw instance. And Hermes has self-learning workflows that codify themselves as you execute them, so that’s another layer of customization that happens automatically.

So where I net out on this (subject to change, of course): I think the customizations that get deeply embedded into a harness are the moat. The more you invest in the harness from a personal standpoint, the stickier it is, and the harder it is to leave.

On the other hand... maybe I’m completely wrong about memory not being a moat. The more a model knows about me, the more it can proactively address my needs. While we haven’t yet seen a breakthrough in “proactive” agent use cases out of the box in Claude, ChatGPT, etc., I suspect we will eventually. And the more the models know about you, the better they’ll be at proactively giving you the best overall experience. That sounds pretty sticky.
